This essay was first published at The Pathos of Things newsletter. Subscribe here.

The 1937 World Fair in Paris was the stage for one of the great symbolic confrontations of the 20th century. On either side of the unfortunately titled Avenue of Peace, with the Eiffel Tower in the immediate background, the pavilions of Nazi Germany and the Soviet Union faced one another. The former was a soaring cuboid of limestone columns, crowned with the brooding figure of an eagle clutching a swastika; the latter was a stepped podium supporting an enormous statue of a man and woman holding a hammer and sickle aloft.

This is, at first glance, the perfect illustration of an old Europe being crushed in the antagonism of two ideological extremes: Communism versus National Socialism, Stalin versus Hitler. But on closer inspection, the symbolism becomes less clear-cut. For one thing, there is a striking degree of formal similarity between the two pavilions. And when you think about it, these are strange monuments for states committed, in one case, to the glorification of the German race, and in the other, to the emancipation of workers from bourgeois domination. As was noted by the Nazi architect Albert Speer, who designed the German structure, both pavilions took the form of a simplified neoclassicism: a modern interpretation of ancient Greek, Roman, and Renaissance architecture.

These paradoxes point to some of the problems faced by totalitarian states of the 1920s and 30s in their efforts to use design as a political tool. They all believed in the transformative potential of aesthetics, regarding architecture, uniforms, graphic design and iconography as means for reshaping society and infusing it with a sense of ideological purpose. All used public space and ceremony to mobilise the masses. Italian Fascist rallies were politicised total artworks, as were those of the Nazis, with their massed banners, choreographed movements, and feverish oratory broadcast across the nation by radio. In Moscow, revolutionary holidays included the ritual of crowds filing past new buildings and displays of city plans, saluting the embodiments of Stalin’s mission to “build socialism.”

The beginnings of all this, as I wrote last week, can be seen in the Empire Style of Napoleon Bonaparte, a design language intended to cultivate an Enlightenment ethos of reason and progress. But whereas it is not surprising that, in the early 19th century, Napoleon assumed this language should be neoclassical, the return to that genre more than a century later revealed the contradictions of the modernising state more than its power.

One issue was the fraught nature of transformation itself. The regimes of Mussolini, Hitler and Stalin all wished to present themselves as revolutionary, breaking with the past (or at least a rhetorically useful idea of the past) while harnessing the Promethean power of mass politics and technology. Yet it had long been evident that the promise of modernity came with an undertow of alienation, stemming in particular from the perceived loss of a more rooted, organic form of existence. This tension had already been engrained in modern design through the medieval nostalgia of the Gothic revival and the arts and crafts movement, currents that carried on well into the 20th century; the Bauhaus, for instance, was founded on the model of the medieval guild.

This raised an obvious dilemma. Totalitarian states were inclined to brand themselves with a distinct, unified style, in order to clearly communicate their encompassing authority. But how can a single style represent the potency of modernity – of technology, rationality and social transformation – while also compensating for the insecurity produced by these same forces? The latter could hardly be neglected by regimes whose first priority was stability and control.

Another problem was that neither the designer nor the state can choose how a given style is received by society at large. People have expectations about how things ought to look, and a framework of associations that informs their response to any designed object. Influencing the public therefore means engaging it partly on its own terms. Not only does this limit what can be successfully communicated through design, it raises the question of whether communication is even possible between more radical designers and a mass audience, groups who are likely to have very different aesthetic intuitions. This too was already clear by the turn of the 20th century, as various designers who tried to develop a socialist style, from William Morris to the early practitioners of art nouveau in Belgium, found themselves working for a small circle of progressive bourgeois clients.

Constraints like these decided much about the character of totalitarian design. They were least obvious in Mussolini’s Italy, since the Fascist mantra of restoring the grandeur of ancient Rome found a natural expression in modernised classical forms, the most famous example being the Palazzo della Civiltà Italiana in Rome. The implicit elitism of this enterprise was offset by the strikingly modern style of military dress Mussolini had pioneered in the 1920s, a deliberate contrast with the aristocratic attire of the preceding era. The Fascist blend of ancient and modern was also flexible enough to accommodate more radical designers such as Giuseppe Terragni, whose work for the regime included innovative collages and buildings like the Casa del Fascio in Como.

The situation in the Soviet Union was rather different. The aftermath of the October Revolution of 1917 witnessed an incredible florescence of creativity, as artists and designers answered the revolution’s call to build a new world. But as Stalin consolidated his dictatorship in the early 1930s, he looked upon cultural experimentation with suspicion. In theory Soviet planners still hoped the urban environment could be a tool for creating a socialist society, but the upheaval caused by Stalin’s policies of rapid industrial development and the new atmosphere of conservatism ultimately cautioned against radicalism in design.

Then there was the awkward fact that the proletariat on whose behalf the new society would be constructed showed little enthusiasm for the ideas of the avant garde. When it came to building the industrial city of Magnitogorsk, for instance, the regime initially requested plans from the German Modernist Ernst May. But after enormous effort on May’s part, his functionalist approach to workers’ housing was eventually rejected for its abstraction and meanness. As Stephen Kotkin writes, “for the Soviet authorities, no less than many ordinary people, their buildings had to ‘look like something,’ had to make one feel proud, make one see that the proletariat… would have its attractive buildings.”

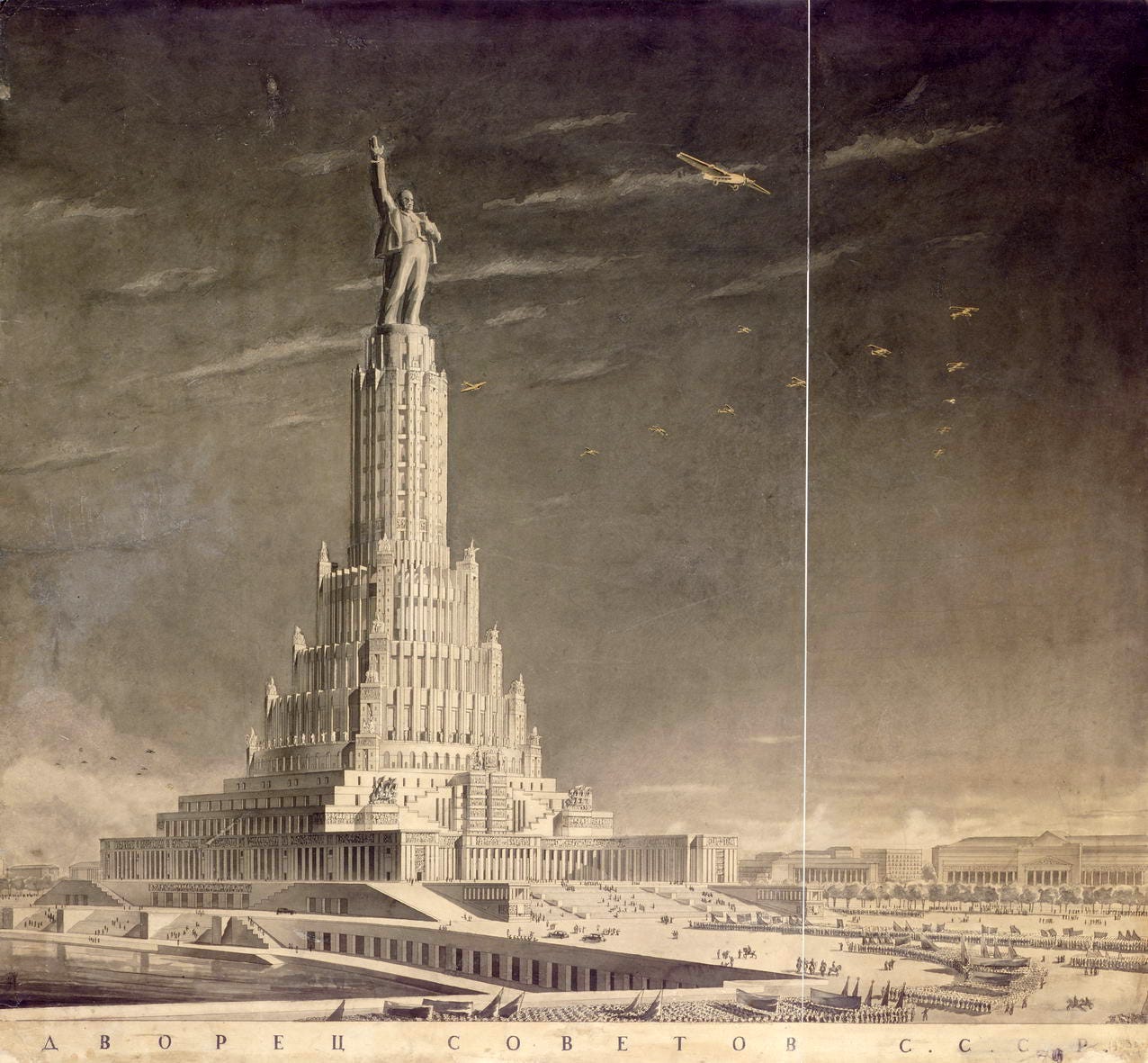

By the mid-1930s, the architectural establishment had come to the unlikely conclusion that a grandiose form of neoclassicism was the true expression of Soviet Communism. This was duly adopted as Stalin’s official style. Thus the Soviet Union became the most reactionary of the totalitarian states in design terms, smothering a period of extraordinary idealism in favour of what were deemed the eternally valid forms of ancient Greece and Rome. The irony was captured by Stalin’s decision to demolish one of the most sacred buildings of the Russian Orthodox Church, the Cathedral of Christ the Saviour in Moscow, and resurrect in its place a Palace of the Soviets. Having received proposals from some of Europe’s most celebrated progressive architects, the regime instead chose Boris Iofan to build a gargantuan neoclassical structure topped by a statue of Lenin (the project was abandoned some years later). Iofan himself had previously worked for Mussolini’s regime in Libya.

If Stalinism ended up being represented by a combination of overcrowded industrial landscapes and homages to the classical past, this was more stylistic unity than Nazi Germany was able to achieve. Hitler’s regime was pulled in at least three directions, between its admiration for modern technology, its obsession with the culture of an imagined Nordic Volk (which, in a society traumatised by war and economic ruin, functioned partly as a retreat from modernity), and Germany’s own tradition of monumental neoclassicism inherited from the Enlightenment. Consequently there was no National Socialist style, but an assortment of ideological solutions in different contexts.

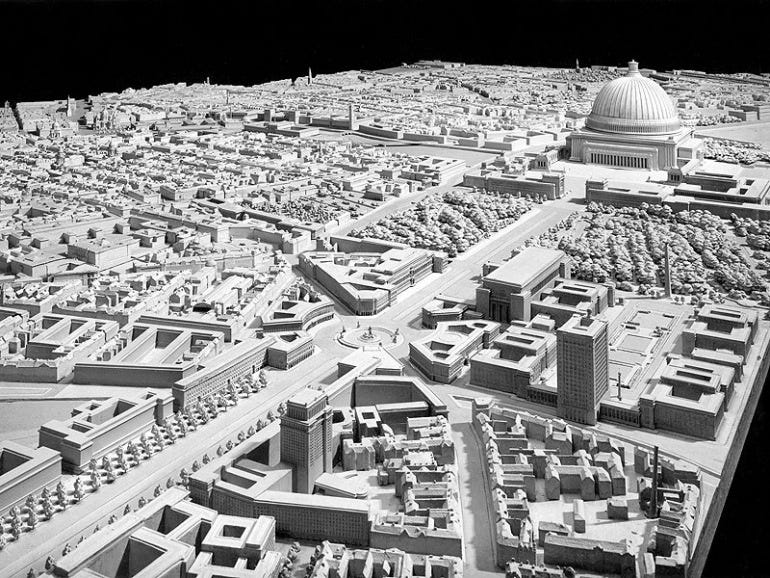

Despite closing the Bauhaus on coming to power in 1933, the Nazis imitated that school’s sleek functionalist aesthetic in their industrial and military design, including the Volkswagen cars designed to travel on the much-vaunted Autobahn. Yet the citizens who worked in the regime’s modern factories were sometimes provided housing in the Heimatstil, an imitation of a traditional rural vernacular. Propaganda could be printed in a Gothic Blackletter typeface or broadcast through mass-produced radios. But the absurdity of Nazi ideology was best demonstrated by the fact that, like Stalin, Hitler could not conceive of a monumental style to embellish his regime that did not continue in the cosmopolitan neoclassical tradition inspired by the ancient Mediterranean. The cut-stone embodiments of the Third Reich, including Hitler’s imagined imperial capital of Germania, were projected in the stark neoclassicism of Speer’s pavilion for the Paris World Fair. It was only in the regime’s theatrical public ceremonies that these clashing ideas were integrated into something like a unified aesthetic experience, as the goose-stepping traditions of Prussian militarism were updated with Hugo Boss uniforms and the crypto-Modernist swastika banner.

Of course it was not contradictions of style that ended the three classic totalitarian regimes; it was the destruction of National Socialism and Fascism in the Second World War, and Stalin’s death in 1953. Still, it seems safe to say that no state after them saw in design the same potential for a transformative mass politics.

Dictatorships did make use of design in the later parts of the 20th century, but that is a subject for another day. As in the western world, they were strongly influenced by Modernism. A lot of concrete was poured, some of it into quite original forms – in Tito’s Yugoslavia for instance – and much of it into impoverished grey cityscapes. Stalinist neoclassicism continued sporadically in the Communist world, and many opulent palaces were constructed, in a partial reversion to older habits of royalty. Above all though, the chaos of ongoing urbanisation undermined any pretence of the state to shape the aesthetic environment of most of its citizens, a loss of control symbolised by the fate of the great planned capitals of the 1950s, Le Corbusier’s Chandigarh and Lúcio Costa’s Brasilia, which overflowed their margins with satellite cities and slums.

In the global market society of recent decades, the stylistic pluralism of the mega-city is the overwhelming pattern (or lack of pattern), seen even in the official buildings of an authoritarian state like China. On the other hand, I’ve recently argued elsewhere that various repressive regimes have found a kind of signature style in the spectacular works of celebrity architects, the purpose of which is not to set them apart but to confirm their rightful place in the global economic and financial order. But today the politics of built form feel like an increasingly marginal leftover from an earlier time. It has long been in the realm of media that aesthetics play their most important political role, a role that will only continue to grow.
